Table of Contents

- I. Getting started

- II. User guide

- 6. Buildroot configuration

- 7. Configuration of other components

- 8. General Buildroot usage

- 8.1. make tips

- 8.2. Understanding when a full rebuild is necessary

- 8.3. Understanding how to rebuild packages

- 8.4. Offline builds

- 8.5. Building out-of-tree

- 8.6. Environment variables

- 8.7. Dealing efficiently with filesystem images

- 8.8. Details about packages

- 8.9. Generating CycloneDX SBOM

- 8.10. Graphing the dependencies between packages

- 8.11. Graphing the build duration

- 8.12. Graphing the filesystem size contribution of packages

- 8.13. Top-level parallel build

- 8.14. Advanced usage

- 9. Project-specific customization

- 9.1. Recommended directory structure

- 9.2. Keeping customizations outside of Buildroot

- 9.3. Storing the Buildroot configuration

- 9.4. Storing the configuration of other components

- 9.5. Customizing the generated target filesystem

- 9.6. Adding custom user accounts

- 9.7. Customization after the images have been created

- 9.8. Adding project-specific patches and hashes

- 9.9. Adding project-specific packages

- 9.10. Quick guide to storing your project-specific customizations

- 10. Integration topics

- 11. Frequently Asked Questions & Troubleshooting

- 11.1. The boot hangs after Starting network…

- 11.2. Why is there no compiler on the target?

- 11.3. Why are there no development files on the target?

- 11.4. Why is there no documentation on the target?

- 11.5. Why are some packages not visible in the Buildroot config menu?

- 11.6. Why not use the target directory as a chroot directory?

- 11.7. Why doesn’t Buildroot generate binary packages (.deb, .ipkg…)?

- 11.8. How to speed-up the build process?

- 11.9. How does Buildroot support Y2038?

- 12. Known issues

- 13. Legal notice and licensing

- 14. Beyond Buildroot

- III. Developer guide

- 15. How Buildroot works

- 16. Coding style

- 17. Adding support for a particular board

- 18. Adding new packages to Buildroot

- 18.1. Package directory

- 18.2. Config files

- 18.3. The .mk file

- 18.4. The .hash file

- 18.5. The SNNfoo start script

- 18.6. Infrastructure for packages with specific build systems

- 18.7. Infrastructure for autotools-based packages

- 18.8. Infrastructure for CMake-based packages

- 18.9. Infrastructure for Python packages

- 18.10. Infrastructure for LuaRocks-based packages

- 18.11. Infrastructure for Perl/CPAN packages

- 18.12. Infrastructure for virtual packages

- 18.13. Infrastructure for packages using kconfig for configuration files

- 18.14. Infrastructure for rebar-based packages

- 18.15. Infrastructure for Waf-based packages

- 18.16. Infrastructure for Meson-based packages

- 18.17. Infrastructure for Cargo-based packages

- 18.18. Infrastructure for Go packages

- 18.19. Infrastructure for QMake-based packages

- 18.20. Infrastructure for packages building kernel modules

- 18.21. Infrastructure for asciidoc documents

- 18.22. Infrastructure specific to the Linux kernel package

- 18.23. Hooks available in the various build steps

- 18.24. Gettext integration and interaction with packages

- 18.25. Tips and tricks

- 18.26. Conclusion

- 19. Patching a package

- 20. Download infrastructure

- 21. Debugging Buildroot

- 22. Contributing to Buildroot

- 23. DEVELOPERS file and get-developers

- 24. Release Engineering

- IV. Appendix


Buildroot 2026.05-rc1 manual generated on 2026-05-16 10:17:02 UTC from git revision 6b1b1d6380

The Buildroot manual is written by the Buildroot developers. It is licensed under the GNU General Public License, version 2. Refer to the COPYING file in the Buildroot sources for the full text of this license.

Copyright © The Buildroot developers <buildroot@buildroot.org>

Buildroot is a tool that simplifies and automates the process of building a complete Linux system for an embedded system, using cross-compilation.

In order to achieve this, Buildroot is able to generate a cross-compilation toolchain, a root filesystem, a Linux kernel image and a bootloader for your target. Buildroot can be used for any combination of these options, independently (you can for example use an existing cross-compilation toolchain, and build only your root filesystem with Buildroot).

Buildroot is useful mainly for people working with embedded systems. Embedded systems often use processors that are not the regular x86 processors everyone is used to having in their PC. They can be PowerPC processors, MIPS processors, ARM processors, etc.

Buildroot supports numerous processors and their variants; it also comes with default configurations for several boards available off-the-shelf. Besides this, a number of third-party projects are based on, or develop their BSP [1] or SDK [2] on top of Buildroot.

Buildroot is designed to run on Linux systems.

While Buildroot itself will build most host packages it needs for the compilation, certain standard Linux utilities are expected to be already installed on the host system. Below you will find an overview of the mandatory and optional packages (note that package names may vary between distributions).

Build tools:

- which
- sed
- make (version 3.81 or any later)
- binutils
- build-essential (only for Debian based systems)
- diffutils
- gcc (version 4.8 or any later)
- g++ (version 4.8 or any later)
- bash
- patch
- gzip
- bzip2
- perl (version 5.8.7 or any later)
- tar
- cpio
- unzip
- rsync
- file (must be in /usr/bin/file)
- bc
- findutils
- awk

Source fetching tools:

- wget

Recommended dependencies:

Some features or utilities in Buildroot, like the legal-info, or the graph generation tools, have additional dependencies. Although they are not mandatory for a simple build, they are still highly recommended:

- python (version 2.7 or any later)

Configuration interface dependencies:

For these libraries, you need to install both runtime and development data, which in many distributions are packaged separately. The development packages typically have a -dev or -devel suffix.

- ncurses5 to use the menuconfig interface
- qt5 to use the xconfig interface
- glib2, gtk2 and glade2 to use the gconfig interface

Source fetching tools:

In the official tree, most of the package sources are retrieved using wget from ftp, http or https locations. A few packages are only available through a version control system. Moreover, Buildroot is capable of downloading sources via other tools, like git or scp (refer to Chapter 20, Download infrastructure for more details). If you enable packages using any of these methods, you will need to install the corresponding tool on the host system:

- bazaar
- curl
- cvs
- git
- mercurial
- scp
- sftp
- subversion

Java-related packages, if the Java Classpath needs to be built for the target system:

- The javac compiler
- The jar tool

Documentation generation tools:

- asciidoc, version 8.6.3 or higher
- w3m
- python with the argparse module (automatically present in 2.7+ and 3.2+)
- dblatex (required for the pdf manual only)

Graph generation tools:

- graphviz to use graph-depends and <pkg>-graph-depends
- python-matplotlib to use graph-build

Package statistics tools (pkg-stats):

- python-aiohttp
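As a quick sanity check before a first build, the mandatory tools listed above can be probed from the shell. The following is a minimal sketch, not part of Buildroot: the function name missing_tools and the variable MANDATORY_TOOLS are made up for this example, and package-only names from the list (binutils, build-essential, diffutils, findutils) are left out because they do not correspond to a single command.

```shell
#!/bin/sh
# Commands taken from the mandatory list above. Package-only names
# (binutils, build-essential, diffutils, findutils) are omitted on purpose:
# they do not map to one command, so this check is only an approximation.
MANDATORY_TOOLS="which sed make gcc g++ bash patch gzip bzip2 perl tar cpio unzip rsync file bc awk wget"

# Print every command given as argument that cannot be found in PATH.
missing_tools() {
    for tool in "$@"; do
        command -v "$tool" >/dev/null 2>&1 || printf '%s\n' "$tool"
    done
}

# Report what is missing on this host (word splitting is intended here).
missing_tools $MANDATORY_TOOLS

# The list above requires file to be installed at this exact location.
[ -x /usr/bin/file ] || echo "file is expected at /usr/bin/file"
```

An empty output suggests that the commands are present; it does not verify the minimum versions given in the list.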

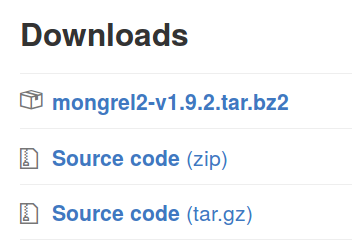

Buildroot releases are made every 3 months, in February, May, August and November. Release numbers are in the format YYYY.MM, so for example 2013.02, 2014.08.

Release tarballs are available at https://buildroot.org/downloads/.

For your convenience, a Vagrantfile is available in support/misc/Vagrantfile in the Buildroot source tree to quickly set up a virtual machine with the needed dependencies to get started.

If you want to set up an isolated Buildroot environment on Linux or Mac OS X, paste this line into your terminal:

curl -O https://buildroot.org/downloads/Vagrantfile; vagrant up

If you are on Windows, paste this into PowerShell:

(new-object System.Net.WebClient).DownloadFile( "https://buildroot.org/downloads/Vagrantfile","Vagrantfile"); vagrant up

If you want to follow development, you can use the daily snapshots or make a clone of the Git repository. Refer to the Download page of the Buildroot website for more details.

Important: you can and should build everything as a normal user. There is no need to be root to configure and use Buildroot. By running all commands as a regular user, you protect your system against packages behaving badly during compilation and installation.

The first step when using Buildroot is to create a configuration. Buildroot has a nice configuration tool similar to the one you can find in the Linux kernel or in BusyBox.

From the buildroot directory, run

$ make menuconfig

for the original curses-based configurator, or

$ make nconfig

for the new curses-based configurator, or

$ make xconfig

for the Qt-based configurator, or

$ make gconfig

for the GTK-based configurator.

All of these "make" commands will need to build a configuration utility (including the interface), so you may need to install "development" packages for relevant libraries used by the configuration utilities. Refer to Chapter 2, System requirements for more details, specifically the optional requirements to get the dependencies of your favorite interface.

For each menu entry in the configuration tool, you can find associated help that describes the purpose of the entry. Refer to Chapter 6, Buildroot configuration for details on some specific configuration aspects.

Once everything is configured, the configuration tool generates a .config file that contains the entire configuration. This file will be read by the top-level Makefile.

To start the build process, simply run:

$ make

By default, Buildroot does not support top-level parallel build, so running make -jN is not necessary. There is however experimental support for top-level parallel build, see Section 8.13, “Top-level parallel build”.

The make command will generally perform the following steps:

- download source files (as required);

- configure, build and install the cross-compilation toolchain, or simply import an external toolchain;

- configure, build and install selected target packages;

- build a kernel image, if selected;

- build a bootloader image, if selected;

- create a root filesystem in selected formats.

Buildroot output is stored in a single directory, output/.

This directory contains several subdirectories:

- images/ where all the images (kernel image, bootloader and root filesystem images) are stored. These are the files you need to put on your target system.

- build/ where all the components are built (this includes tools needed by Buildroot on the host and packages compiled for the target). This directory contains one subdirectory for each of these components.

- host/ contains both the tools built for the host, and the sysroot of the target toolchain. The former is an installation of tools compiled for the host that are needed for the proper execution of Buildroot, including the cross-compilation toolchain. The latter is a hierarchy similar to a root filesystem hierarchy. It contains the headers and libraries of all user-space packages that provide and install libraries used by other packages. However, this directory is not intended to be the root filesystem for the target: it contains a lot of development files, unstripped binaries and libraries that make it far too big for an embedded system. These development files are used to compile libraries and applications for the target that depend on other libraries.

- staging/ is a symlink to the target toolchain sysroot inside host/, which exists for backwards compatibility.

- target/ which contains almost the complete root filesystem for the target: everything needed is present except the device files in /dev/ (Buildroot can’t create them because Buildroot doesn’t run as root and doesn’t want to run as root). Also, it doesn’t have the correct permissions (e.g. setuid for the busybox binary). Therefore, this directory should not be used on your target. Instead, you should use one of the images built in the images/ directory. If you need an extracted image of the root filesystem for booting over NFS, then use the tarball image generated in images/ and extract it as root. Compared to staging/, target/ contains only the files and libraries needed to run the selected target applications: the development files (headers, etc.) are not present, the binaries are stripped.
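The NFS case mentioned for target/ can be sketched as follows. This is an illustrative helper rather than a Buildroot command: the function name extract_rootfs is made up, and the paths in the commented invocation (a tarball named rootfs.tar and an export directory /srv/nfs/rootfs) are assumptions that depend on your configuration.

```shell
# Extract a root filesystem tarball into a directory, e.g. an NFS export.
# Run it as root (for example through sudo) so that the ownership,
# permissions and device files stored in the tarball can be restored.
extract_rootfs() {
    tarball=$1
    dest=$2
    mkdir -p "$dest" && tar -xpf "$tarball" -C "$dest"
}

# Hypothetical invocation; both paths are assumptions:
#   extract_rootfs output/images/rootfs.tar /srv/nfs/rootfs
```

Extracting the tarball, rather than copying output/target/, is what restores the device files and permissions that target/ lacks.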

These commands, make menuconfig|nconfig|gconfig|xconfig and make, are the basic ones that allow you to easily and quickly generate images fitting your needs, with all the features and applications you enabled.

More details about the "make" command usage are given in Section 8.1, “make tips”.

Like any open source project, Buildroot has different ways to share information in its community and outside.

Each of those ways may interest you if you are looking for some help, want to understand Buildroot or contribute to the project.

- Mailing List

Buildroot has a mailing list for discussion and development. It is the main method of interaction for Buildroot users and developers.

Only subscribers to the Buildroot mailing list are allowed to post to this list. You can subscribe via the mailing list info page.

Mails that are sent to the mailing list are also available in the mailing list archives, available through Mailman or at lore.kernel.org.

- IRC

The Buildroot IRC channel #buildroot is hosted on OFTC. It is a useful place to ask quick questions or discuss certain topics.

When asking for help on IRC, share relevant logs or pieces of code using a code sharing website, such as https://paste.ack.tf/.

Note that for certain questions, posting to the mailing list may be better as it will reach more people, both developers and users.

- Bug tracker

Bugs in Buildroot can be reported via the mailing list or alternatively via the Buildroot bugtracker. Please refer to Section 22.6, “Reporting issues/bugs or getting help” before creating a bug report.

- Wiki

The Buildroot wiki page is hosted on the eLinux wiki. It contains some useful links, an overview of past and upcoming events, and a TODO list.

- Patchwork

Patchwork is a web-based patch tracking system designed to facilitate the contribution and management of contributions to an open-source project. Patches that have been sent to a mailing list are 'caught' by the system, and appear on a web page. Any comments posted that reference the patch are appended to the patch page too. For more information on Patchwork see http://jk.ozlabs.org/projects/patchwork/.

Buildroot’s Patchwork website is mainly for use by Buildroot’s maintainer to ensure patches aren’t missed. It is also used by Buildroot patch reviewers (see also Section 22.3.1, “Applying Patches from Patchwork”). However, since the website exposes patches and their corresponding review comments in a clean and concise web interface, it can be useful for all Buildroot developers.

The Buildroot patch management interface is available at https://patchwork.ozlabs.org/project/buildroot/list/.

All the configuration options in make *config have a help text providing details about the option.

The make *config commands also offer a search tool. Read the help message in the different frontend menus to know how to use it:

- in menuconfig, the search tool is called by pressing /;
- in xconfig, the search tool is called by pressing Ctrl+f.

The result of the search shows the help message of the matching items. In menuconfig, numbers in the left column provide a shortcut to the corresponding entry. Just type this number to directly jump to the entry, or to the containing menu in case the entry is not selectable due to a missing dependency.

Although the menu structure and the help text of the entries should be sufficiently self-explanatory, a number of topics require additional explanation that cannot easily be covered in the help text and are therefore covered in the following sections.

A compilation toolchain is the set of tools that allows you to compile code for your system. It consists of a compiler (in our case, gcc), binary utils like assembler and linker (in our case, binutils) and a C standard library (for example GNU Libc, uClibc-ng).

The system installed on your development station certainly already has a compilation toolchain that you can use to compile an application that runs on your system. If you’re using a PC, your compilation toolchain runs on an x86 processor and generates code for an x86 processor. Under most Linux systems, the compilation toolchain uses the GNU libc (glibc) as the C standard library. This compilation toolchain is called the "host compilation toolchain". The machine on which it is running, and on which you’re working, is called the "host system" [3].

The compilation toolchain is provided by your distribution, and Buildroot has nothing to do with it (other than using it to build a cross-compilation toolchain and other tools that are run on the development host).

As said above, the compilation toolchain that comes with your system runs on and generates code for the processor in your host system. As your embedded system has a different processor, you need a cross-compilation toolchain - a compilation toolchain that runs on your host system but generates code for your target system (and target processor). For example, if your host system uses x86 and your target system uses ARM, the regular compilation toolchain on your host runs on x86 and generates code for x86, while the cross-compilation toolchain runs on x86 and generates code for ARM.

Buildroot provides two solutions for the cross-compilation toolchain:

- The internal toolchain backend, called Buildroot toolchain in the configuration interface.
- The external toolchain backend, called External toolchain in the configuration interface.

The choice between these two solutions is made using the Toolchain Type option in the Toolchain menu. Once one solution has been chosen, a number of configuration options appear; they are detailed in the following sections.

The internal toolchain backend is the backend where Buildroot builds by itself a cross-compilation toolchain, before building the userspace applications and libraries for your target embedded system.

This backend supports several C libraries: uClibc-ng, glibc and musl.

Once you have selected this backend, a number of options appear. The most important ones allow you to:

- Change the version of the Linux kernel headers used to build the toolchain. This item deserves a few explanations. In the process of building a cross-compilation toolchain, the C library is being built. This library provides the interface between userspace applications and the Linux kernel. In order to know how to "talk" to the Linux kernel, the C library needs to have access to the Linux kernel headers (i.e. the .h files from the kernel), which define the interface between userspace and the kernel (system calls, data structures, etc.). Since this interface is backward compatible, the version of the Linux kernel headers used to build your toolchain does not need to match exactly the version of the Linux kernel you intend to run on your embedded system. It only needs to be equal to or older than the version of the Linux kernel you intend to run. If you use kernel headers that are more recent than the Linux kernel you run on your embedded system, then the C library might be using interfaces that are not provided by your Linux kernel.

- Change the version of the GCC compiler, binutils and the C library.

- Select a number of toolchain options (uClibc only): whether the toolchain should have RPC support (used mainly for NFS), wide-char support, locale support (for internationalization), C++ support or thread support. Depending on which options you choose, the number of userspace applications and libraries visible in Buildroot menus will change: many applications and libraries require certain toolchain options to be enabled. Most packages show a comment when a certain toolchain option is required to be able to enable those packages. If needed, you can further refine the uClibc configuration by running make uclibc-menuconfig. Note however that all packages in Buildroot are tested against the default uClibc configuration bundled in Buildroot: if you deviate from this configuration by removing features from uClibc, some packages may no longer build.

It is worth noting that whenever one of those options is modified, then the entire toolchain and system must be rebuilt. See Section 8.2, “Understanding when a full rebuild is necessary”.
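The kernel headers rule described above (headers equal to or older than the running kernel) can be expressed as a small version comparison. This is only a sketch: the function name headers_compatible is made up, and it relies on sort -V, the version sort provided by GNU coreutils, which is assumed to be available on the host.

```shell
# Succeed when the kernel headers version is equal to or older than the
# version of the kernel that will run on the target.
headers_compatible() {
    headers=$1
    kernel=$2
    # sort -V orders version strings, so the oldest one comes out first.
    oldest=$(printf '%s\n%s\n' "$headers" "$kernel" | sort -V | head -n 1)
    [ "$oldest" = "$headers" ]
}

# Example: headers 5.10 with a 6.1 kernel are fine, 6.6 headers are not.
headers_compatible 5.10 6.1 && echo "5.10 headers are usable with a 6.1 kernel"
headers_compatible 6.6 6.1 || echo "6.6 headers are too recent for a 6.1 kernel"
```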

Advantages of this backend:

- Well integrated with Buildroot

- Fast, only builds what’s necessary

Drawbacks of this backend:

- Rebuilding the toolchain is needed when doing make clean, which takes time. If you’re trying to reduce your build time, consider using the External toolchain backend.

The external toolchain backend allows you to use existing pre-built cross-compilation toolchains. Buildroot knows about a number of well-known cross-compilation toolchains (from Linaro for ARM, Sourcery CodeBench for ARM, x86-64, PowerPC, and MIPS) and is capable of downloading them automatically, or it can be pointed to a custom toolchain, either available for download or installed locally.

Then, you have three solutions to use an external toolchain:

- Use a predefined external toolchain profile, and let Buildroot download, extract and install the toolchain. Buildroot already knows about a few CodeSourcery and Linaro toolchains. Just select the toolchain profile in Toolchain from the available ones. This is definitely the easiest solution.

- Use a predefined external toolchain profile, but instead of having Buildroot download and extract the toolchain, you can tell Buildroot where your toolchain is already installed on your system. Just select the toolchain profile in Toolchain from the available ones, unselect Download toolchain automatically, and fill in the Toolchain path text entry with the path to your cross-compiling toolchain.

- Use a completely custom external toolchain. This is particularly useful for toolchains generated using crosstool-NG or with Buildroot itself. To do this, select the Custom toolchain solution in the Toolchain list. You need to fill in the Toolchain path, Toolchain prefix and External toolchain C library options. Then, you have to tell Buildroot what your external toolchain supports. If your external toolchain uses the glibc library, you only have to tell whether your toolchain supports C++ or not and whether it has built-in RPC support. If your external toolchain uses the uClibc library, then you have to tell Buildroot if it supports RPC, wide-char, locale, program invocation, threads and C++. At the beginning of the execution, Buildroot will tell you if the selected options do not match the toolchain configuration.

Our external toolchain support has been tested with toolchains from CodeSourcery and Linaro, toolchains generated by crosstool-NG, and toolchains generated by Buildroot itself. In general, all toolchains that support the sysroot feature should work. If not, do not hesitate to contact the developers.

We do not support toolchains or SDK generated by OpenEmbedded or Yocto, because these toolchains are not pure toolchains (i.e. just the compiler, binutils, the C and C++ libraries). Instead these toolchains come with a very large set of pre-compiled libraries and programs. Therefore, Buildroot cannot import the sysroot of the toolchain, as it would contain hundreds of megabytes of pre-compiled libraries that are normally built by Buildroot.

We also do not support using the distribution toolchain (i.e. the gcc/binutils/C library installed by your distribution) as the toolchain to build software for the target. This is because your distribution toolchain is not a "pure" toolchain (i.e. only with the C/C++ library), so we cannot import it properly into the Buildroot build environment. So even if you are building a system for an x86 or x86_64 target, you have to generate a cross-compilation toolchain with Buildroot or crosstool-NG.

If you want to generate a custom toolchain for your project, that can be used as an external toolchain in Buildroot, our recommendation is to build it either with Buildroot itself (see Section 6.1.3, “Build an external toolchain with Buildroot”) or with crosstool-NG.

Advantages of this backend:

- Allows you to use well-known and well-tested cross-compilation toolchains.

- Avoids the build time of the cross-compilation toolchain, which is often very significant in the overall build time of an embedded Linux system.

Drawbacks of this backend:

- If your pre-built external toolchain has a bug, it may be hard to get a fix from the toolchain vendor, unless you build your external toolchain by yourself using Buildroot or crosstool-NG.

The Buildroot internal toolchain option can be used to create an external toolchain. Here is a series of steps to build an internal toolchain and package it up for reuse by Buildroot itself (or other projects).

Create a new Buildroot configuration, with the following details:

- Select the appropriate Target options for your target CPU architecture

- In the Toolchain menu, keep the default of Buildroot toolchain for Toolchain type, and configure your toolchain as desired

- In the System configuration menu, select None as the Init system and none as /bin/sh

- In the Target packages menu, disable BusyBox

- In the Filesystem images menu, disable tar the root filesystem

Then, we can trigger the build, and also ask Buildroot to generate an SDK. This will conveniently generate a tarball which contains our toolchain:

make sdk

This produces the SDK tarball in $(O)/images, with a name similar to arm-buildroot-linux-uclibcgnueabi_sdk-buildroot.tar.gz. Save this tarball, as it is now the toolchain that you can re-use as an external toolchain in other Buildroot projects.

In those other Buildroot projects, in the Toolchain menu:

- Set Toolchain type to External toolchain

- Set Toolchain to Custom toolchain

- Set Toolchain origin to Toolchain to be downloaded and installed

- Set Toolchain URL to file:///path/to/your/sdk/tarball.tar.gz
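Since the Toolchain URL shown above uses an absolute path after file://, a small helper can build the URL from wherever the SDK tarball was saved. This is a sketch only; the function name sdk_tarball_url and the tarball location in the commented invocation are hypothetical.

```shell
# Print a file:// URL for a local SDK tarball, suitable for pasting into
# the Toolchain URL option. The directory part is made absolute first.
sdk_tarball_url() {
    dir=$(cd "$(dirname "$1")" && pwd) || return 1
    printf 'file://%s/%s\n' "$dir" "$(basename "$1")"
}

# Hypothetical invocation; the tarball location is an assumption:
#   sdk_tarball_url ../sdk/arm-buildroot-linux-uclibcgnueabi_sdk-buildroot.tar.gz
```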

When using an external toolchain, Buildroot generates a wrapper program that transparently passes the appropriate options (according to the configuration) to the external toolchain programs. In case you need to debug this wrapper to check exactly what arguments are passed, you can set the environment variable BR2_DEBUG_WRAPPER to one of:

- 0, empty or not set: no debug
- 1: trace all arguments on a single line
- 2: trace one argument per line

On a Linux system, the /dev directory contains special files, called device files, that allow userspace applications to access the hardware devices managed by the Linux kernel. Without these device files, your userspace applications would not be able to use the hardware devices, even if they are properly recognized by the Linux kernel.

Under System configuration, /dev management, Buildroot offers four different solutions to handle the /dev directory:

- The first solution is Static using device table. This is the old classical way of handling device files in Linux. With this method, the device files are persistently stored in the root filesystem (i.e. they persist across reboots), and there is nothing that will automatically create and remove those device files when hardware devices are added or removed from the system. Buildroot therefore creates a standard set of device files using a device table, the default one being stored in system/device_table_dev.txt in the Buildroot source code. This file is processed when Buildroot generates the final root filesystem image, and the device files are therefore not visible in the output/target directory. The BR2_ROOTFS_STATIC_DEVICE_TABLE option allows you to change the default device table used by Buildroot, or to add an additional device table, so that additional device files are created by Buildroot during the build. So, if you use this method, and a device file is missing in your system, you can for example create a board/<yourcompany>/<yourproject>/device_table_dev.txt file that contains the description of your additional device files, and then you can set BR2_ROOTFS_STATIC_DEVICE_TABLE to system/device_table_dev.txt board/<yourcompany>/<yourproject>/device_table_dev.txt. For more details about the format of the device table file, see Chapter 25, Makedev syntax documentation.

- The second solution is Dynamic using devtmpfs only. devtmpfs is a virtual filesystem inside the Linux kernel that has been introduced in kernel 2.6.32 (if you use an older kernel, it is not possible to use this option). When mounted in /dev, this virtual filesystem will automatically make device files appear and disappear as hardware devices are added and removed from the system. This filesystem is not persistent across reboots: it is filled dynamically by the kernel. Using devtmpfs requires the following kernel configuration options to be enabled: CONFIG_DEVTMPFS and CONFIG_DEVTMPFS_MOUNT. When Buildroot is in charge of building the Linux kernel for your embedded device, it makes sure that those two options are enabled. However, if you build your Linux kernel outside of Buildroot, then it is your responsibility to enable those two options (if you fail to do so, your Buildroot system will not boot).

- The third solution is Dynamic using devtmpfs + mdev. This method also relies on the devtmpfs virtual filesystem detailed above (so the requirement to have CONFIG_DEVTMPFS and CONFIG_DEVTMPFS_MOUNT enabled in the kernel configuration still applies), but adds the mdev userspace utility on top of it. mdev is a program that is part of BusyBox and that the kernel will call every time a device is added or removed. Thanks to the /etc/mdev.conf configuration file, mdev can be configured to, for example, set specific permissions or ownership on a device file, call a script or application whenever a device appears or disappears, etc. Basically, it allows userspace to react to device addition and removal events. mdev can for example be used to automatically load kernel modules when devices appear on the system. mdev is also important if you have devices that require firmware, as it will be responsible for pushing the firmware contents to the kernel. mdev is a lightweight implementation (with fewer features) of udev. For more details about mdev and the syntax of its configuration file, see http://git.busybox.net/busybox/tree/docs/mdev.txt.

- The fourth solution is Dynamic using devtmpfs + eudev. This method also relies on the devtmpfs virtual filesystem detailed above, but adds the eudev userspace daemon on top of it. eudev is a daemon that runs in the background, and gets called by the kernel when a device gets added or removed from the system. It is a more heavyweight solution than mdev, but provides higher flexibility. eudev is a standalone version of udev, the original userspace daemon used in most desktop Linux distributions, which is now part of Systemd. For more details, see http://en.wikipedia.org/wiki/Udev.

The Buildroot developers' recommendation is to start with the Dynamic using devtmpfs only solution, until you need userspace to be notified when devices are added/removed, or until firmware is needed, in which case Dynamic using devtmpfs + mdev is usually a good solution.

Note that if systemd is chosen as init system, /dev management will be performed by the udev program provided by systemd.
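For a kernel built outside of Buildroot, the two devtmpfs options named above can be checked in the kernel configuration file before booting. The sketch below assumes a standard kernel .config file where enabled options appear as NAME=y; the function name missing_devtmpfs_options and the path in the commented invocation are made up for this example.

```shell
# Print each required devtmpfs option that is not set to "y" in the given
# kernel configuration file.
missing_devtmpfs_options() {
    config=$1
    for opt in CONFIG_DEVTMPFS CONFIG_DEVTMPFS_MOUNT; do
        grep -q "^${opt}=y\$" "$config" || printf '%s\n' "$opt"
    done
}

# Hypothetical invocation; the path to the kernel tree is an assumption:
#   missing_devtmpfs_options /path/to/linux/.config
```

An empty output means both options are enabled in that file.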

The init program is the first userspace program started by the kernel (it carries the PID number 1), and is responsible for starting the userspace services and programs (for example: web server, graphical applications, other network servers, etc.).

Buildroot allows you to use three different types of init systems, which can be chosen from System configuration, Init system:

- The first solution is BusyBox. Amongst many programs, BusyBox has an implementation of a basic init program, which is sufficient for most embedded systems. Enabling BR2_INIT_BUSYBOX will ensure BusyBox builds and installs its init program. This is the default solution in Buildroot. The BusyBox init program will read the /etc/inittab file at boot to know what to do. The syntax of this file can be found in http://git.busybox.net/busybox/tree/examples/inittab (note that BusyBox inittab syntax is special: do not use a random inittab documentation from the Internet to learn about BusyBox inittab). The default inittab in Buildroot is stored in package/busybox/inittab. Apart from mounting a few important filesystems, the main job the default inittab does is to start the /etc/init.d/rcS shell script, and start a getty program (which provides a login prompt).

- The second solution is systemV. This solution uses the old traditional sysvinit program, packaged in Buildroot in package/sysvinit. This was the solution used in most desktop Linux distributions, until they switched to more recent alternatives such as Upstart or Systemd. sysvinit also works with an inittab file (which has a slightly different syntax than the one from BusyBox). The default inittab installed with this init solution is located in package/sysvinit/inittab.

- The third solution is systemd. systemd is the new generation init system for Linux. It does far more than traditional init programs: it offers aggressive parallelization capabilities, uses socket and D-Bus activation for starting services, offers on-demand starting of daemons, keeps track of processes using Linux control groups, supports snapshotting and restoring of the system state, etc. systemd will be useful on relatively complex embedded systems, for example the ones requiring D-Bus and services communicating between each other. It is worth noting that systemd brings a fairly big number of large dependencies: dbus, udev and more. For more details about systemd, see http://www.freedesktop.org/wiki/Software/systemd.

The solution recommended by Buildroot developers is to use the BusyBox init as it is sufficient for most embedded systems. systemd can be used for more complex situations.

[3] This terminology differs from what is used by GNU configure, where the host is the machine on which the application will run (which is usually the same as target)

Before attempting to modify any of the components below, make sure you have already configured Buildroot itself, and have enabled the corresponding package.

- BusyBox

If you already have a BusyBox configuration file, you can directly specify this file in the Buildroot configuration, using BR2_PACKAGE_BUSYBOX_CONFIG. Otherwise, Buildroot will start from a default BusyBox configuration file.

To make subsequent changes to the configuration, use make busybox-menuconfig to open the BusyBox configuration editor.

It is also possible to specify a BusyBox configuration file through an environment variable, although this is not recommended. Refer to Section 8.6, “Environment variables” for more details.

- uClibc

Configuration of uClibc is done in the same way as for BusyBox. The configuration variable to specify an existing configuration file is BR2_UCLIBC_CONFIG. The command to make subsequent changes is make uclibc-menuconfig.

- Linux kernel

If you already have a kernel configuration file, you can directly specify this file in the Buildroot configuration, using BR2_LINUX_KERNEL_USE_CUSTOM_CONFIG.

If you do not yet have a kernel configuration file, you can either start by specifying a defconfig in the Buildroot configuration, using BR2_LINUX_KERNEL_USE_DEFCONFIG, or start by creating an empty file and specifying it as custom configuration file, using BR2_LINUX_KERNEL_USE_CUSTOM_CONFIG.

To make subsequent changes to the configuration, use make linux-menuconfig to open the Linux configuration editor.

- Barebox

Configuration of Barebox is done in the same way as for the Linux kernel. The corresponding configuration variables are BR2_TARGET_BAREBOX_USE_CUSTOM_CONFIG and BR2_TARGET_BAREBOX_USE_DEFCONFIG. To open the configuration editor, use make barebox-menuconfig.

- U-Boot

Configuration of U-Boot (version 2015.04 or newer) is done in the same way as for the Linux kernel. The corresponding configuration variables are BR2_TARGET_UBOOT_USE_CUSTOM_CONFIG and BR2_TARGET_UBOOT_USE_DEFCONFIG. To open the configuration editor, use make uboot-menuconfig.

This is a collection of tips that help you make the most of Buildroot.

Display all commands executed by make:

$ make V=1 <target>

Display the list of boards with a defconfig:

$ make list-defconfigs

Display all available targets:

$ make help

Not all targets are always available; some settings in the .config file may hide some targets:

- busybox-menuconfig only works when busybox is enabled;

- linux-menuconfig and linux-savedefconfig only work when linux is enabled;

- uclibc-menuconfig is only available when the uClibc C library is selected in the internal toolchain backend;

- barebox-menuconfig and barebox-savedefconfig only work when the barebox bootloader is enabled;

- uboot-menuconfig and uboot-savedefconfig only work when the U-Boot bootloader is enabled and the uboot build system is set to Kconfig.

Cleaning: Explicit cleaning is required when any of the architecture or toolchain configuration options are changed.

To delete all build products (including build directories, host, staging and target trees, the images and the toolchain):

$ make clean

Generating the manual: The present manual sources are located in the docs/manual directory. To generate the manual:

$ make manual-clean
$ make manual

The manual outputs will be generated in output/docs/manual.

Notes

- A few tools are required to build the documentation (see: Section 2.2, “Optional packages”).

Resetting Buildroot for a new target: To delete all build products as well as the configuration:

$ make distclean

Notes. If ccache is enabled, running make clean or distclean does not empty the compiler cache used by Buildroot. To delete it, refer to Section 8.14.3, “Using ccache in Buildroot”.

Dumping the internal make variables: One can dump the variables known to make, along with their values:

$ make -s printvars VARS='VARIABLE1 VARIABLE2'
VARIABLE1=value_of_variable
VARIABLE2=value_of_variable

It is possible to tweak the output using some variables:

- VARS will limit the listing to variables whose names match the specified make-patterns; this must be set, otherwise nothing is printed

- QUOTED_VARS, if set to YES, will single-quote the value

- RAW_VARS, if set to YES, will print the unexpanded value

For example:

$ make -s printvars VARS=BUSYBOX_%DEPENDENCIES
BUSYBOX_DEPENDENCIES=skeleton toolchain
BUSYBOX_FINAL_ALL_DEPENDENCIES=skeleton toolchain
BUSYBOX_FINAL_DEPENDENCIES=skeleton toolchain
BUSYBOX_FINAL_PATCH_DEPENDENCIES=
BUSYBOX_RDEPENDENCIES=ncurses util-linux

$ make -s printvars VARS=BUSYBOX_%DEPENDENCIES QUOTED_VARS=YES
BUSYBOX_DEPENDENCIES='skeleton toolchain'
BUSYBOX_FINAL_ALL_DEPENDENCIES='skeleton toolchain'
BUSYBOX_FINAL_DEPENDENCIES='skeleton toolchain'
BUSYBOX_FINAL_PATCH_DEPENDENCIES=''
BUSYBOX_RDEPENDENCIES='ncurses util-linux'

$ make -s printvars VARS=BUSYBOX_%DEPENDENCIES RAW_VARS=YES
BUSYBOX_DEPENDENCIES=skeleton toolchain
BUSYBOX_FINAL_ALL_DEPENDENCIES=$(sort $(BUSYBOX_FINAL_DEPENDENCIES) $(BUSYBOX_FINAL_PATCH_DEPENDENCIES))
BUSYBOX_FINAL_DEPENDENCIES=$(sort $(BUSYBOX_DEPENDENCIES))
BUSYBOX_FINAL_PATCH_DEPENDENCIES=$(sort $(BUSYBOX_PATCH_DEPENDENCIES))
BUSYBOX_RDEPENDENCIES=ncurses util-linux

The output of quoted variables can be reused in shell scripts, for example:

$ eval $(make -s printvars VARS=BUSYBOX_DEPENDENCIES QUOTED_VARS=YES)
$ echo $BUSYBOX_DEPENDENCIES
skeleton toolchain
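To illustrate why the quoting matters when the output is passed to eval, here is a minimal sketch that can be run outside of a Buildroot tree. The sample_printvars function is a hypothetical stand-in, not part of Buildroot: it only echoes the quoted sample line shown in this section, where a real script would call make -s printvars instead.

```shell
#!/bin/sh
# Hypothetical stand-in for:
#   make -s printvars VARS=BUSYBOX_DEPENDENCIES QUOTED_VARS=YES
# It only echoes the sample line from this section, so that the
# snippet does not need a Buildroot tree to run.
sample_printvars() {
    echo "BUSYBOX_DEPENDENCIES='skeleton toolchain'"
}

# The single quotes keep the two-word value together when the line is
# evaluated as a shell assignment.
eval "$(sample_printvars)"

# Iterate over the dependencies, one per line.
for dep in $BUSYBOX_DEPENDENCIES; do
    echo "dependency: $dep"
done
```

Without the single quotes, the shell would parse the evaluated line as an assignment followed by a command named toolchain, which is why QUOTED_VARS=YES is used when the values are meant to be consumed by a script.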

Buildroot does not attempt to detect what parts of the system should be rebuilt when the system configuration is changed through make menuconfig, make xconfig or one of the other configuration tools. In some cases, Buildroot should rebuild the entire system; in other cases, only a specific subset of packages. But detecting this in a completely reliable manner is very difficult, and therefore the Buildroot developers have decided to simply not attempt to do this.

Instead, it is the responsibility of the user to know when a full rebuild is necessary. As a hint, here are a few rules of thumb that can help you understand how to work with Buildroot:

- When the target architecture configuration is changed, a complete rebuild is needed. Changing the architecture variant, the binary format or the floating point strategy for example has an impact on the entire system.

- When the toolchain configuration is changed, a complete rebuild generally is needed. Changing the toolchain configuration often involves changing the compiler version, the type of C library or its configuration, or some other fundamental configuration item, and these changes have an impact on the entire system.

- When an additional package is added to the configuration, a full rebuild is not necessarily needed. Buildroot will detect that this package has never been built, and will build it. However, if this package is a library that can optionally be used by packages that have already been built, Buildroot will not automatically rebuild those. Either you know which packages should be rebuilt, and you can rebuild them manually, or you should do a full rebuild. For example, let’s suppose you have built a system with the ctorrent package, but without openssl. Your system works, but you realize you would like to have SSL support in ctorrent, so you enable the openssl package in Buildroot configuration and restart the build. Buildroot will detect that openssl should be built and will build it, but it will not detect that ctorrent should be rebuilt to gain OpenSSL support. You will either have to do a full rebuild, or rebuild ctorrent itself.

- When a package is removed from the configuration, Buildroot does not do anything special. It does not remove the files installed by this package from the target root filesystem or from the toolchain sysroot. A full rebuild is needed to get rid of this package. However, generally you don’t necessarily need this package to be removed right now: you can wait for the next lunch break to restart the build from scratch.

- When the sub-options of a package are changed, the package is not automatically rebuilt. After making such changes, rebuilding only this package is often sufficient, unless enabling the package sub-option adds some features to the package that are useful for another package which has already been built. Again, Buildroot does not track when a package should be rebuilt: once a package has been built, it is never rebuilt unless explicitly told to do so.

- When a change to the root filesystem skeleton is made, a full rebuild is needed. However, when changes to the root filesystem overlay, a post-build script or a post-image script are made, there is no need for a full rebuild: a simple make invocation will take the changes into account.

- When a package listed in FOO_DEPENDENCIES is rebuilt or removed, the package foo is not automatically rebuilt. For example, if a package bar is listed in FOO_DEPENDENCIES with FOO_DEPENDENCIES = bar and the configuration of the bar package is changed, the configuration change would not result in a rebuild of package foo automatically. In this scenario, you may need to either rebuild any packages in your build which reference bar in their DEPENDENCIES, or perform a full rebuild to ensure any bar dependent packages are up to date.

Generally speaking, when you’re facing a build error and you’re unsure of the potential consequences of the configuration changes you’ve made, do a full rebuild. If you get the same build error, then you are sure that the error is not related to partial rebuilds of packages, and if this error occurs with packages from the official Buildroot, do not hesitate to report the problem! As your experience with Buildroot progresses, you will progressively learn when a full rebuild is really necessary, and you will save more and more time.

For reference, a full rebuild is achieved by running:

$ make clean all

One of the most common questions asked by Buildroot users is how to rebuild a given package or how to remove a package without rebuilding everything from scratch.

Removing a package is unsupported by Buildroot without rebuilding from scratch. This is because Buildroot doesn’t keep track of which package installs what files in the output/staging and output/target directories, or which package would be compiled differently depending on the availability of another package.

The easiest way to rebuild a single package from scratch is to remove its build directory in output/build. Buildroot will then re-extract, re-configure, re-compile and re-install this package from scratch. You can ask Buildroot to do this with the make <package>-dirclean command.

On the other hand, if you only want to restart the build process of a package from its compilation step, you can run make <package>-rebuild. It will restart the compilation and installation of the package, but not from scratch: it basically re-executes make and make install inside the package, so it will only rebuild files that changed.

If you want to restart the build process of a package from its configuration step, you can run make <package>-reconfigure. It will restart the configuration, compilation and installation of the package.

While <package>-rebuild implies <package>-reinstall and <package>-reconfigure implies <package>-rebuild, these targets as well as <package> only act on the said package, and do not trigger re-creating the root filesystem image. If re-creating the root filesystem is necessary, one should in addition run make or make all.
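As an illustration of how these targets combine, the following sketch wraps the two steps in small shell helpers. The function names are hypothetical and not part of Buildroot; they only chain the make targets described above and are meant to be run from the directory where you normally invoke make.

```shell
#!/bin/sh
# Hypothetical helpers, not provided by Buildroot.

# Rebuild one package from scratch (re-extract, re-configure,
# re-compile, re-install), then regenerate the root filesystem image.
# Usage: rebuild_from_scratch <package>
rebuild_from_scratch() {
    make "${1}-dirclean" && make
}

# Restart one package from its compilation step only, then regenerate
# the root filesystem image.
# Usage: rebuild_incremental <package>
rebuild_incremental() {
    make "${1}-rebuild" && make
}
```

For the ctorrent scenario, one would run rebuild_from_scratch ctorrent after enabling openssl. The trailing make is what re-creates the images, since the package-specific targets do not do so on their own.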

Internally, Buildroot creates so-called stamp files to keep track of which build steps have been completed for each package. They are stored in the package build directory, output/build/<package>-<version>/ and are named .stamp_<step-name>. The commands detailed above simply manipulate these stamp files to force Buildroot to restart a specific set of steps of a package build process.
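To see which steps Buildroot considers done for a package, one can simply list its stamp files. The helper below is a hypothetical sketch, not a Buildroot command; it assumes a find implementation that supports -maxdepth (GNU and BSD find both do).

```shell
#!/bin/sh
# Hypothetical helper, not provided by Buildroot.
# List the stamp files found directly in a package build directory.
# Usage: list_stamps output/build/<package>-<version>
list_stamps() {
    find "$1" -maxdepth 1 -name '.stamp_*' | sort
}
```

Each path printed corresponds to one completed step, following the .stamp_<step-name> naming described above.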

Further details about package special make targets are explained in Section 8.14.5, “Package-specific make targets”.

If you intend to do an offline build and just want to download all sources that you previously selected in the configurator (menuconfig, nconfig, xconfig or gconfig), then issue:

$ make source

You can now disconnect or copy the content of your dl directory to the build-host.

By default, everything built by Buildroot is stored in the directory output in the Buildroot tree.

Buildroot also supports building out of tree with a syntax similar to the Linux kernel. To use it, add O=<directory> to the make command line:

$ make O=/tmp/build menuconfig

Or:

$ cd /tmp/build; make O=$PWD -C path/to/buildroot menuconfig

All the output files will be located under /tmp/build. If the O path does not exist, Buildroot will create it.

Note: the O path can be either an absolute or a relative path, but if it’s passed as a relative path, it is important to note that it is interpreted relative to the main Buildroot source directory, not the current working directory.

When using out-of-tree builds, the Buildroot .config and temporary files are also stored in the output directory. This means that you can safely run multiple builds in parallel using the same source tree as long as they use unique output directories.

For ease of use, Buildroot generates a Makefile wrapper in the output directory, so after the first run, you no longer need to pass O=<…> and -C <…>; simply run (in the output directory):

$ make <target>
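The following sketch shows one way to keep several out-of-tree builds side by side from a single source tree. The helper names, the BUILDROOT_DIR variable and its default location are assumptions made for this example, not Buildroot conventions; absolute output paths are used to avoid the relative-path pitfall mentioned above.

```shell
#!/bin/sh
# Hypothetical location of the Buildroot source tree; adjust as needed.
BUILDROOT_DIR=${BUILDROOT_DIR:-$HOME/buildroot}

# Configure a new output directory from a defconfig.
# Usage: configure_out_of_tree <absolute-output-dir> <defconfig-target>
configure_out_of_tree() {
    make -C "$BUILDROOT_DIR" O="$1" "$2"
}

# Run a target through the wrapper Makefile that Buildroot generated in
# an already configured output directory (default target if none given).
# Usage: build_out_of_tree <output-dir> [target...]
build_out_of_tree() {
    dir=$1
    shift
    make -C "$dir" "$@"
}
```

A typical session would call configure_out_of_tree once per output directory with the defconfig of your board, then build_out_of_tree on each of them, possibly from different terminals since every build keeps its own .config and temporary files.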

Buildroot also honors some environment variables, when they are passed to make or set in the environment:

- HOSTCXX, the host C++ compiler to use

- HOSTCC, the host C compiler to use

- UCLIBC_CONFIG_FILE=<path/to/.config>, path to the uClibc configuration file, used to compile uClibc, if an internal toolchain is being built. Note that the uClibc configuration file can also be set from the configuration interface, so through the Buildroot .config file; this is the recommended way of setting it.

- BUSYBOX_CONFIG_FILE=<path/to/.config>, path to the BusyBox configuration file. Note that the BusyBox configuration file can also be set from the configuration interface, so through the Buildroot .config file; this is the recommended way of setting it.

- BR2_CCACHE_DIR to override the directory where Buildroot stores the cached files when using ccache.

- BR2_DL_DIR to override the directory in which Buildroot stores/retrieves downloaded files. Note that the Buildroot download directory can also be set from the configuration interface, so through the Buildroot .config file. See Section 8.14.4, “Location of downloaded packages” for more details on how you can set the download directory.

- BR2_GRAPH_ALT, if set and non-empty, to use an alternate color-scheme in build-time graphs

- BR2_GRAPH_OUT to set the filetype of generated graphs, either pdf (the default), or png.

- BR2_GRAPH_DEPS_OPTS to pass extra options to the dependency graph; see Section 8.10, “Graphing the dependencies between packages” for the accepted options

- BR2_GRAPH_DOT_OPTS is passed verbatim as options to the dot utility to draw the dependency graph.

- BR2_GRAPH_SIZE_OPTS to pass extra options to the size graph; see Section 8.12, “Graphing the filesystem size contribution of packages” for the accepted options

An example that uses config files located in the toplevel directory and in your $HOME:

$ make UCLIBC_CONFIG_FILE=uClibc.config BUSYBOX_CONFIG_FILE=$HOME/bb.config

If you want to use a compiler other than the default gcc or g++ for building helper-binaries on your host, then do:

$ make HOSTCXX=g++-4.3-HEAD HOSTCC=gcc-4.3-HEAD

Filesystem images can get pretty big, depending on the filesystem you choose, the number of packages, whether you provisioned free space… Yet, some locations in the filesystems images may just be empty (e.g. a long run of zeroes); such a file is called a sparse file.

Most tools can handle sparse files efficiently, and will only store or write those parts of a sparse file that are not empty.

For example:

- tar accepts the -S option to tell it to only store non-zero blocks of sparse files:

  - tar cf archive.tar -S [files…] will efficiently store sparse files in a tarball

  - tar xf archive.tar -S will efficiently store sparse files extracted from a tarball

- cp accepts the --sparse=WHEN option (WHEN is one of auto, never or always):

  - cp --sparse=always source.file dest.file will make dest.file a sparse file if source.file has long runs of zeroes

Other tools may have similar options. Please consult their respective man pages.
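The short session below is a sketch of these options in action on a throwaway file. It assumes GNU coreutils (truncate, stat -c, du, cp --sparse) and GNU tar; the file name rootfs.img is only an example and is not produced by Buildroot here.

```shell
#!/bin/sh
# Assumes GNU coreutils and GNU tar. Works in a temporary directory.
workdir=$(mktemp -d)
img="$workdir/rootfs.img"

# Create a 16 MiB file made of a single hole (it reads back as zeroes).
truncate -s 16M "$img"

# Compare the apparent size with the space actually allocated on disk.
apparent=$(stat -c %s "$img")
echo "apparent size: $apparent bytes"
du -k "$img"

# Copy the file, re-creating holes for long runs of zeroes.
cp --sparse=always "$img" "$workdir/copy.img"

# Archive and extract it while storing only the non-zero blocks.
tar cf "$workdir/archive.tar" -S -C "$workdir" rootfs.img
mkdir "$workdir/extracted"
tar xf "$workdir/archive.tar" -S -C "$workdir/extracted"

# All copies have identical contents.
cmp "$img" "$workdir/copy.img" &&
    cmp "$img" "$workdir/extracted/rootfs.img" &&
    echo "all copies are identical"
```

On filesystems that support holes, du typically reports far less than the apparent size for such a file, and the archive stays small because tar records the hole instead of storing the zeroes.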

You can use sparse files if you need to store the filesystem images (e.g. to transfer from one machine to another), or if you need to send them (e.g. to the Q&A team).

Note however that flashing a filesystem image to a device while using the sparse mode of dd may result in a broken filesystem (e.g. the block bitmap of an ext2 filesystem may be corrupted; or, if you have sparse files in your filesystem, those parts may not be all-zeroes when read back). You should only use sparse files when handling files on the build machine, not when transferring them to an actual device that will be used on the target.

Buildroot can produce a JSON blurb that describes the set of enabled packages in the current configuration, together with their dependencies, licenses and other metadata. This JSON blurb is produced by using the show-info make target:

make show-info

Buildroot can also produce details about packages as HTML and JSON output using the pkg-stats make target. Amongst other things, these details include whether known CVEs (security vulnerabilities) affect the packages in your current configuration. It also shows if there is a newer upstream version for those packages.

make pkg-stats

Based on the output of show-info, Buildroot can generate an SBOM in the CycloneDX format. While it doesn’t offer any additional information, CycloneDX is a format specification that can be consumed by other projects.

make show-info | utils/generate-cyclonedx

For more information, check the help of the generate-cyclonedx script; the script call can be tailored to your project.

utils/generate-cyclonedx --help

Similarly to pkg-stats, CycloneDX SBOMs can be enriched with vulnerability analysis from the NVD database.

make show-info | utils/generate-cyclonedx > sbom.cdx.json
cat sbom.cdx.json | support/scripts/cve-check --nvd-path dl/buildroot-nvd/

For more information about CycloneDX see https://cyclonedx.org/.

One of Buildroot’s jobs is to know the dependencies between packages, and make sure they are built in the right order. These dependencies can sometimes be quite complicated, and for a given system, it is often not easy to understand why such or such package was brought into the build by Buildroot.

In order to help understanding the dependencies, and therefore better understand what is the role of the different components in your embedded Linux system, Buildroot is capable of generating dependency graphs.

To generate a dependency graph of the full system you have compiled, simply run:

make graph-depends

You will find the generated graph in output/graphs/graph-depends.pdf.

If your system is quite large, the dependency graph may be too complex and difficult to read. It is therefore possible to generate the dependency graph just for a given package:

make <pkg>-graph-depends

You will find the generated graph in output/graphs/<pkg>-graph-depends.pdf.

Note that the dependency graphs are generated using the dot tool from the Graphviz project, which you must have installed on your system to use this feature. In most distributions, it is available as the graphviz package.

By default, the dependency graphs are generated in the PDF format. However, by passing the BR2_GRAPH_OUT environment variable, you can switch to other output formats, such as PNG, PostScript or SVG. All formats supported by the -T option of the dot tool are supported.

BR2_GRAPH_OUT=svg make graph-depends

The graph-depends behaviour can be controlled by setting options in the BR2_GRAPH_DEPS_OPTS environment variable. The accepted options are:

- --depth N, -d N, to limit the dependency depth to N levels. The default, 0, means no limit.

- --stop-on PKG, -s PKG, to stop the graph on the package PKG. PKG can be an actual package name, a glob, the keyword virtual (to stop on virtual packages), or the keyword host (to stop on host packages). The package is still present on the graph, but its dependencies are not.

- --exclude PKG, -x PKG, like --stop-on, but also omits PKG from the graph.

- --transitive, --no-transitive, to draw (or not) the transitive dependencies. The default is to not draw transitive dependencies.

- --colors R,T,H, the comma-separated list of colors to draw the root package (R), the target packages (T) and the host packages (H). Defaults to: lightblue,grey,gainsboro

BR2_GRAPH_DEPS_OPTS='-d 3 --no-transitive --colors=red,green,blue' make graph-depends

When the build of a system takes a long time, it is sometimes useful to understand which packages take the longest to build, to see if anything can be done to speed up the build. To help with such build time analysis, Buildroot collects the build time of each step of each package, and allows generating graphs from this data.

To generate the build time graph after a build, run:

make graph-build

This will generate a set of files in output/graphs:

- build.hist-build.pdf, a histogram of the build time for each package, ordered in the build order.

- build.hist-duration.pdf, a histogram of the build time for each package, ordered by duration (longest first).

- build.hist-name.pdf, a histogram of the build time for each package, ordered by package name.

- build.pie-packages.pdf, a pie chart of the build time per package.

- build.pie-steps.pdf, a pie chart of the global time spent in each step of the packages build process.

This graph-build target requires the Python Matplotlib and Numpy libraries to be installed (python-matplotlib and python-numpy on most distributions), and also the argparse module if you’re using a Python version older than 2.7 (python-argparse on most distributions).

By default, the output format for the graph is PDF, but a different format can be selected using the BR2_GRAPH_OUT environment variable. The only other format supported is PNG:

BR2_GRAPH_OUT=png make graph-build

When your target system grows, it is sometimes useful to understand how much each Buildroot package is contributing to the overall root filesystem size. To help with such an analysis, Buildroot collects data about files installed by each package and using this data, generates a graph and CSV files detailing the size contribution of the different packages.

To generate these data after a build, run:

make graph-size

This will generate:

- output/graphs/graph-size.pdf, a pie chart of the contribution of each package to the overall root filesystem size

- output/graphs/package-size-stats.csv, a CSV file giving the size contribution of each package to the overall root filesystem size

- output/graphs/file-size-stats.csv, a CSV file giving the size contribution of each installed file to the package it belongs to, and to the overall filesystem size.

This graph-size target requires the Python Matplotlib library to be installed (python-matplotlib on most distributions), and also the argparse module if you’re using a Python version older than 2.7 (python-argparse on most distributions).

Just like for the duration graph, a BR2_GRAPH_OUT environment variable is supported to adjust the output file format. See Section 8.10, “Graphing the dependencies between packages” for details about this environment variable.

Additionally, one may set the environment variable BR2_GRAPH_SIZE_OPTS to further control the generated graph. Accepted options are:

- --size-limit X, -l X, will group all packages whose individual contribution is below X percent into a single entry labelled Others in the graph. By default, X=0.01, which means packages each contributing less than 1% are grouped under Others. Accepted values are in the range [0.0..1.0].

- --iec, --binary, --si, --decimal, to use IEC (binary, powers of 1024) or SI (decimal, powers of 1000; the default) prefixes.

- --biggest-first, to sort packages in decreasing size order, rather than in increasing size order.
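As a sketch of how these options can be combined, the hypothetical helper below (not part of Buildroot) passes a size limit and --biggest-first to the size graph through BR2_GRAPH_SIZE_OPTS. The limit is given as a fraction, so 0.05 groups packages contributing less than 5%.

```shell
#!/bin/sh
# Hypothetical helper, not provided by Buildroot.
# Generate the size graph with small packages grouped under "Others"
# and the largest contributors listed first.
# Usage: graph_size_grouped <limit between 0.0 and 1.0>
graph_size_grouped() {
    BR2_GRAPH_SIZE_OPTS="--size-limit $1 --biggest-first" make graph-size
}
```

For example, graph_size_grouped 0.05 is equivalent to setting BR2_GRAPH_SIZE_OPTS='--size-limit 0.05 --biggest-first' in the environment of a single make graph-size invocation.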

Note. The collected filesystem size data is only meaningful after a complete clean rebuild. Be sure to run make clean all before using make graph-size.

To compare the root filesystem size of two different Buildroot compilations, for example after adjusting the configuration or when switching to another Buildroot release, use the size-stats-compare script. It takes two file-size-stats.csv files (produced by make graph-size) as input. Refer to the help text of this script for more details:

utils/size-stats-compare -h

Note. This section deals with a very experimental feature, which is known to break even in some non-unusual situations. Use at your own risk.

Buildroot has always been capable of using parallel build on a per package basis: each package is built by Buildroot using make -jN (or the equivalent invocation for non-make-based build systems). The level of parallelism is by default number of CPUs + 1, but it can be adjusted using the BR2_JLEVEL configuration option.

Until 2020.02, Buildroot was however building packages in a serial fashion: each package was built one after the other, without parallelization of the build between packages. As of 2020.02, Buildroot has experimental support for top-level parallel build, which allows some significant build time savings by building packages that have no dependency relationship in parallel. This feature is however marked as experimental and is known not to work in some cases.

In order to use top-level parallel build, one must:

- Enable the option BR2_PER_PACKAGE_DIRECTORIES in the Buildroot configuration

- Use make -jN when starting the Buildroot build

Internally, the BR2_PER_PACKAGE_DIRECTORIES option will enable a mechanism called per-package directories, which will have the following effects:

- Instead of a global target directory and a global host directory common to all packages, per-package target and host directories will be used, in $(O)/per-package/<pkg>/target/ and $(O)/per-package/<pkg>/host/ respectively. Those folders will be populated from the corresponding folders of the package dependencies at the beginning of <pkg> build. The compiler and all other tools will therefore only be able to see and access files installed by dependencies explicitly listed by <pkg>.

- At the end of the build, the global target and host directories will be populated, located in $(O)/target and $(O)/host respectively. This means that during the build, those folders will be empty and it’s only at the very end of the build that they will be populated.
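Since both conditions must hold, a small guard can avoid starting a top-level parallel build by mistake. The helper below is a hypothetical sketch, not part of Buildroot; it assumes the directory given as first argument contains both the .config file and a Makefile (the Buildroot tree for in-tree builds, or an output directory with its generated wrapper).

```shell
#!/bin/sh
# Hypothetical helper, not provided by Buildroot.
# Start a top-level parallel build only if per-package directories are
# enabled in the configuration.
# Usage: parallel_build <dir-containing-.config> <jobs>
parallel_build() {
    if ! grep -q '^BR2_PER_PACKAGE_DIRECTORIES=y' "$1/.config"; then
        echo "BR2_PER_PACKAGE_DIRECTORIES is not enabled in $1/.config" >&2
        return 1
    fi
    make -C "$1" -j"$2"
}
```

A call such as parallel_build /tmp/build 8 would then run make -j8 in that directory, or stop with an error message if the option is missing. As noted above, the feature itself remains experimental.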

You may want to compile, for your target, your own programs or other software that are not packaged in Buildroot. In order to do this you can use the toolchain that was generated by Buildroot.

The toolchain generated by Buildroot is located by default in output/host/. The simplest way to use it is to add output/host/bin/ to your PATH environment variable and then to use ARCH-linux-gcc, ARCH-linux-objdump, ARCH-linux-ld, etc.

Alternatively, Buildroot can also export the toolchain and the development files of all selected packages, as an SDK, by running the command make sdk. This generates a tarball of the content of the host directory output/host/, named <TARGET-TUPLE>_sdk-buildroot.tar.gz (which can be overridden by setting the environment variable BR2_SDK_PREFIX) and located in the output directory output/images/.

This tarball can then be distributed to application developers, when they want to develop their applications that are not (yet) packaged as a Buildroot package.

Upon extracting the SDK tarball, the user must run the script relocate-sdk.sh (located at the top directory of the SDK), to make sure all paths are updated with the new location.

Alternatively, if you just want to prepare the SDK without generating the tarball (e.g. because you will just be moving the host directory, or will be generating the tarball on your own), Buildroot also allows you to just prepare the SDK with make prepare-sdk without actually generating a tarball.

For your convenience, by selecting the option BR2_PACKAGE_HOST_ENVIRONMENT_SETUP, you can get an environment-setup script installed in output/host/ and therefore in your SDK. This script can be sourced with . your/sdk/path/environment-setup to export a number of environment variables that will help cross-compile your projects using the Buildroot SDK: the PATH will contain the SDK binaries, standard autotools variables will be defined with the appropriate values, and CONFIGURE_FLAGS will contain basic ./configure options to cross-compile autotools projects. It also provides some useful commands. Note however that once this script is sourced, the environment is set up only for cross-compilation, and no longer for native compilation.

Buildroot allows you to do cross-debugging, where the debugger runs on the build machine and communicates with gdbserver on the target to control the execution of the program.

To achieve this:

- If you are using an internal toolchain (built by Buildroot), you must enable BR2_PACKAGE_HOST_GDB, BR2_PACKAGE_GDB and BR2_PACKAGE_GDB_SERVER. This ensures that both the cross gdb and gdbserver get built, and that gdbserver gets installed to your target.

- If you are using an external toolchain, you should enable BR2_TOOLCHAIN_EXTERNAL_GDB_SERVER_COPY, which will copy the gdbserver included with the external toolchain to the target. If your external toolchain does not have a cross gdb or gdbserver, it is also possible to let Buildroot build them, by enabling the same options as for the internal toolchain backend.

Now, to start debugging a program called foo, you should run on the

target:

gdbserver :2345 foo

This will cause gdbserver to listen on TCP port 2345 for a connection

from the cross gdb.

Then, on the host, you should start the cross gdb using the following command line:

<buildroot>/output/host/bin/<tuple>-gdb -ix <buildroot>/output/staging/usr/share/buildroot/gdbinit foo

Of course, foo must be available in the current directory, built

with debugging symbols. Typically you start this command from the

directory where foo is built (and not from output/target/ as the

binaries in that directory are stripped).

The <buildroot>/output/staging/usr/share/buildroot/gdbinit file will tell the

cross gdb where to find the libraries of the target.

Finally, to connect to the target from the cross gdb:

(gdb) target remote <target ip address>:2345
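The two host-side steps above (starting the cross gdb, then connecting to the target) can be combined into one command line with gdb's -ex option. The following is a sketch only: cross_gdb_cmd is a hypothetical helper, and the Buildroot directory, tuple, target address and program name are placeholders supplied by the caller. It prints the command rather than running it.

```shell
# Sketch: print the host-side cross gdb command for a remote session.
# cross_gdb_cmd is a hypothetical helper, not part of Buildroot.
# Usage: cross_gdb_cmd <buildroot-dir> <tuple> <target-ip> <program>
cross_gdb_cmd() {
    buildroot=$1
    tuple=$2
    target_ip=$3
    program=$4

    gdb="${buildroot}/output/host/bin/${tuple}-gdb"
    gdbinit="${buildroot}/output/staging/usr/share/buildroot/gdbinit"

    # -ix loads the Buildroot gdbinit before the program is read;
    # -ex then runs "target remote" once gdb has started.
    # Port 2345 matches the gdbserver example above.
    printf '%s -ix %s -ex "target remote %s:2345" %s\n' \
        "$gdb" "$gdbinit" "$target_ip" "$program"
}
```

The printed line can be pasted into a shell from the directory where the unstripped program was built.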

ccache is a compiler cache. It stores the object files resulting from each compilation process, and is able to skip future compilation of the same source file (with same compiler and same arguments) by using the pre-existing object files. When doing almost identical builds from scratch a number of times, it can nicely speed up the build process.

ccache support is integrated in Buildroot. You just have to enable

Enable compiler cache in Build options. This will automatically

build ccache and use it for every host and target compilation.

The cache is located in the directory defined by the BR2_CCACHE_DIR

configuration option, which defaults to

$HOME/.buildroot-ccache. This default location is outside of the

Buildroot output directory so that it can be shared by separate

Buildroot builds. If you want to get rid of the cache, simply remove

this directory.

You can get statistics on the cache (its size, number of hits,

misses, etc.) by running make ccache-stats.

The make target ccache-options and the CCACHE_OPTIONS variable

provide more generic access to the ccache. For example:

# set cache limit size

make CCACHE_OPTIONS="--max-size=5G" ccache-options

# zero statistics counters

make CCACHE_OPTIONS="--zero-stats" ccache-options

ccache makes a hash of the source files and of the compiler options.

If a compiler option is different, the cached object file will not be

used. Many compiler options, however, contain an absolute path to the

staging directory. Because of this, building in a different output

directory would lead to many cache misses.

To avoid this issue, Buildroot has the Use relative paths option

(BR2_CCACHE_USE_BASEDIR). This will rewrite all absolute paths that

point inside the output directory into relative paths. Thus, changing

the output directory no longer leads to cache misses.

A disadvantage of the relative paths is that they also end up as relative paths in the object files. As a result, the debugger, for example, will no longer find the source files unless you cd to the output directory first.

See the ccache manual’s section on "Compiling in different directories" for more details about this rewriting of absolute paths.

When ccache is enabled in Buildroot using the BR2_CCACHE=y option:

- ccache is used during the Buildroot build itself

- ccache is not used when building outside of Buildroot, for example when directly calling the cross-compiler or using the SDK

One can override this behavior using the BR2_USE_CCACHE environment

variable: when set to 1, usage of ccache is enabled (default during

the Buildroot build), when unset or set to a value different from 1,

usage of ccache is disabled.
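The rule for BR2_USE_CCACHE can be modeled with a short shell test. This is only an illustration of the documented behavior, not the code Buildroot itself uses; ccache_enabled is a hypothetical function name.

```shell
# Model of the documented BR2_USE_CCACHE rule (not Buildroot's own
# code): ccache is used only when the variable is set to exactly 1.
ccache_enabled() {
    [ "${BR2_USE_CCACHE:-}" = "1" ]
}

# Report the state for the current environment.
if ccache_enabled; then
    echo "ccache: enabled"
else
    echo "ccache: disabled"
fi
```

For instance, when calling the cross-compiler directly, one would expect a prefix such as BR2_USE_CCACHE=1 on the compiler command line to opt back in to ccache for that single invocation.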

The various tarballs that are downloaded by Buildroot are all stored

in BR2_DL_DIR, which by default is the dl directory. If you want

to keep a complete version of Buildroot which is known to be working

with the associated tarballs, you can make a copy of this directory.

This will allow you to regenerate the toolchain and the target

filesystem with exactly the same versions.

If you maintain several Buildroot trees, it might be better to have a

shared download location. This can be achieved by pointing the

BR2_DL_DIR environment variable to a directory. If this is

set, then the value of BR2_DL_DIR in the Buildroot configuration is

overridden. The following line should be added to ~/.bashrc:

export BR2_DL_DIR=<shared download location>

The download location can also be set in the .config file, with the

BR2_DL_DIR option. Unlike most options in the .config file, this value

is overridden by the BR2_DL_DIR environment variable.
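The precedence between the two settings can be sketched as follows. The helper effective_dl_dir is hypothetical and only mimics the rule described above; it treats an empty environment variable as unset, which is an assumption, and it prints the .config value as written without expanding any make variable it may contain.

```shell
# Sketch of the precedence described above: a non-empty BR2_DL_DIR
# environment variable wins over the BR2_DL_DIR line in .config.
# effective_dl_dir is a hypothetical helper, not part of Buildroot.
# Usage: effective_dl_dir <path-to-.config>
effective_dl_dir() {
    config=$1

    if [ -n "${BR2_DL_DIR:-}" ]; then
        printf '%s\n' "$BR2_DL_DIR"
        return 0
    fi

    # Fall back to the configured value, stripping the quotes.
    # The value is printed as written, without any expansion.
    sed -n 's/^BR2_DL_DIR="\(.*\)"$/\1/p' "$config"
}
```

Running effective_dl_dir .config from the directory holding the configuration shows which download directory a subsequent build would be expected to use.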

Running make <package> builds and installs that particular package

and its dependencies.

For packages relying on the Buildroot infrastructure, there are numerous special make targets that can be called independently like this:

make <package>-<target>

The package build targets are (in the order they are executed):

| command/target | Description |
|---|---|
| source | Fetch the source (download the tarball, clone the source repository, etc.) |
| depends | Build and install all dependencies required to build the package |
| extract | Put the source in the package build directory (extract the tarball, copy the source, etc.) |
| patch | Apply the patches, if any |
| configure | Run the configure commands, if any |
| build | Run the compilation commands |
| install-staging | target package: Run the installation of the package in the staging directory, if necessary |
| install-target | target package: Run the installation of the package in the target directory, if necessary |
| install | target package: Run the 2 previous installation commands; host package: Run the installation of the package in the host directory |

Additionally, there are some other useful make targets:

| command/target | Description |
|---|---|
| show-depends | Displays the first-order dependencies required to build the package |
| show-recursive-depends | Recursively displays the dependencies required to build the package |
| show-rdepends | Displays the first-order reverse dependencies of the package (i.e. packages that directly depend on it) |
| show-recursive-rdepends | Recursively displays the reverse dependencies of the package (i.e. the packages that depend on it, directly or indirectly) |
| graph-depends | Generate a dependency graph of the package, in the context of the current Buildroot configuration. See section 8.10, Graphing the dependencies between packages, for more details about dependency graphs. |
| graph-rdepends | Generate a graph of this package's reverse dependencies (i.e. the packages that depend on it, directly or indirectly) |
| graph-both-depends | Generate a graph of this package in both directions (i.e. the packages that depend on it and on which it depends, directly or indirectly) |
| dirclean | Remove the whole package build directory |
| reinstall | Re-run the install commands |
| rebuild | Re-run the compilation commands - this only makes sense when using the OVERRIDE_SRCDIR feature or when you modified a file directly in the build directory |
| reconfigure | Re-run the configure commands, then rebuild - this only makes sense when using the OVERRIDE_SRCDIR feature or when you modified a file directly in the build directory |

The normal operation of Buildroot is to download a tarball, extract

it, configure, compile and install the software component found inside

this tarball. The source code is extracted in

output/build/<package>-<version>, which is a temporary directory:

whenever make clean is used, this directory is entirely removed, and

re-created at the next make invocation. Even when a Git or

Subversion repository is used as the input for the package source

code, Buildroot creates a tarball out of it, and then behaves as it

normally does with tarballs.

This behavior is well-suited when Buildroot is used mainly as an integration tool, to build and integrate all the components of an embedded Linux system. However, if one uses Buildroot during the development of certain components of the system, this behavior is not very convenient: one would instead like to make a small change to the source code of one package, and be able to quickly rebuild the system with Buildroot.

Making changes directly in output/build/<package>-<version> is not

an appropriate solution, because this directory is removed on make

clean.

Therefore, Buildroot provides a specific mechanism for this use case:

the <pkg>_OVERRIDE_SRCDIR mechanism. Buildroot reads an override

file, which allows the user to tell Buildroot the location of the

source for certain packages.

The default location of the override file is $(CONFIG_DIR)/local.mk,

as defined by the BR2_PACKAGE_OVERRIDE_FILE configuration option.

$(CONFIG_DIR) is the location of the Buildroot .config file, so

local.mk by default lives side-by-side with the .config file,

which means:

- In the top-level Buildroot source directory for in-tree builds (i.e., when O= is not used)

- In the out-of-tree directory for out-of-tree builds (i.e., when O= is used)

If a different location than these defaults is required, it can be

specified through the BR2_PACKAGE_OVERRIDE_FILE configuration

option.

In this override file, Buildroot expects to find lines of the form:

<pkg1>_OVERRIDE_SRCDIR = /path/to/pkg1/sources

<pkg2>_OVERRIDE_SRCDIR = /path/to/pkg2/sources

For example:

LINUX_OVERRIDE_SRCDIR = /home/bob/linux/

BUSYBOX_OVERRIDE_SRCDIR = /home/bob/busybox/

When Buildroot finds that for a given package, an

<pkg>_OVERRIDE_SRCDIR has been defined, it will no longer attempt to

download, extract and patch the package. Instead, it will directly use

the source code available in the specified directory and make clean

will not touch this directory. This allows you to point Buildroot to your

own directories, that can be managed by Git, Subversion, or any other

version control system. To achieve this, Buildroot will use rsync to

copy the source code of the component from the specified

<pkg>_OVERRIDE_SRCDIR to output/build/<package>-custom/.

This mechanism is best used in conjunction with the make

<pkg>-rebuild and make <pkg>-reconfigure targets. A make

<pkg>-rebuild all sequence will rsync the source code from

<pkg>_OVERRIDE_SRCDIR to output/build/<package>-custom (thanks to

rsync, only the modified files are copied), and restart the build

process of just this package.

In the example of the linux package above, the developer can then

make a source code change in /home/bob/linux and then run:

make linux-rebuild all

and in a matter of seconds gets the updated Linux kernel image in

output/images. Similarly, a change can be made to the BusyBox source

code in /home/bob/busybox, and after:

make busybox-rebuild all

the root filesystem image in output/images contains the updated

BusyBox.
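Maintaining the override file by hand is straightforward, but the bookkeeping can also be scripted. The following is a minimal sketch under stated assumptions: set_override is a hypothetical helper, not part of Buildroot, and it assumes the variable prefix is the package name in upper case with '-' and '.' replaced by '_'. It writes to local.mk in the directory given as first argument.

```shell
# Sketch: record a source override for one package in local.mk.
# set_override is a hypothetical helper, not part of Buildroot.
# Usage: set_override <config-dir> <package> <source-dir>
set_override() {
    config_dir=$1
    pkg=$2
    srcdir=$3

    # Variable prefix: package name in upper case, with '-' and '.'
    # mapped to '_' (assumed naming convention).
    var=$(printf '%s' "$pkg" | tr 'a-z.-' 'A-Z__')_OVERRIDE_SRCDIR

    file="$config_dir/local.mk"
    touch "$file" || return 1

    # Drop any earlier setting of this variable, then append the new one.
    # grep exits non-zero when it prints nothing, hence "|| true".
    grep -v "^${var}[ =]" "$file" > "$file.tmp" || true
    printf '%s = %s\n' "$var" "$srcdir" >> "$file.tmp"
    mv "$file.tmp" "$file"
}
```

For an in-tree build, set_override . linux /home/bob/linux followed by make linux-rebuild all would reproduce the Linux workflow described above.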

Source trees for big projects often contain hundreds or thousands of

files which are not needed for building, but will slow down the process

of copying the sources with rsync. Optionally, it is possible to define

<pkg>_OVERRIDE_SRCDIR_RSYNC_EXCLUSIONS to skip syncing certain files

from the source tree. For example, when working on the webkitgtk

package, the following will exclude the tests and in-tree builds from

a local WebKit source tree:

WEBKITGTK_OVERRIDE_SRCDIR = /home/bob/WebKit

WEBKITGTK_OVERRIDE_SRCDIR_RSYNC_EXCLUSIONS = \

--exclude JSTests --exclude ManualTests --exclude PerformanceTests \

--exclude WebDriverTests --exclude WebKitBuild --exclude WebKitLibraries \